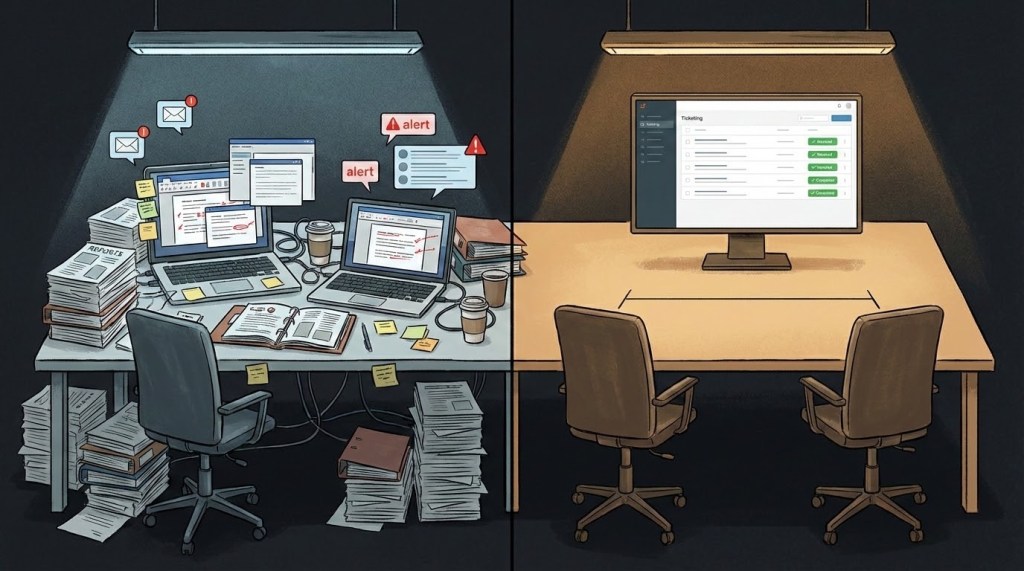

Ask any pentester how their findings get delivered and the answer is almost always the same: a PDF. Sometimes polished with a cover page and an executive summary, sometimes a Word doc hastily converted. But the mechanism hasn’t changed in decades — a document gets produced at the end of the engagement, emailed to someone on the client side, and from there it enters a chain of forwarding, filing, and forgetting that everyone in the industry recognises but nobody seriously questions.

The person who found the vulnerability and the person who needs to fix it almost never speak directly. Findings pass through project managers, team leads, and sometimes compliance officers before they reach a developer — if they reach one at all. By the time they do, the context is stripped. What’s left is a title, a severity rating, and a remediation suggestion written by someone who doesn’t know the codebase.

I didn’t question it either. Not for years.

The cost nobody questions

There’s a second layer to this problem that the industry has simply accepted as overhead.

Writing a pentest report takes days. Not the testing – the documentation. Formatting findings, writing proof-of-concept details, structuring the executive summary, running it through internal review. Then the client fixes some things, a retest happens, and the report gets rewritten. Updated findings, updated status, updated summary.

In most engagements, this isn’t a one-time cycle. It’s a series of retests – sometimes three, sometimes more – each producing an updated version of the same document. The time spent writing, reviewing, reformatting, and rewriting reports that exist only to satisfy process is time nobody’s spending on actually improving security.

Both sides pay for it. The consulting firm absorbs the labour cost of producing and revising documents. The client pays for the overhead indirectly – through engagement pricing that bakes in days of reporting alongside days of actual testing. Everyone knows this. Nobody pushes back. It’s just how it’s done.

What it looks like when it works

I spent time working inside a large product-based company – the kind of engineering environment where security isn’t a separate function that shows up once a year. Their security engineers and development teams operated in the same ecosystem. Jira for scope, planning, and ticket management. Confluence for threat models, write-ups, and documentation. Everything lived where the developers already worked.

The security team didn’t produce a PDF at the end and email it to someone. They raised findings as tickets — tagged, prioritised, assigned to the right people with enough context to act on immediately. The developer who needed to fix a vulnerability could see the finding, ask questions, and mark it resolved without waiting for a report to be rewritten.

It sounds obvious when you describe it. But coming from years of traditional consulting — where the PDF is sacred and the delivery model is “write it up, email it over, hope someone reads it” — it changed how I thought about what delivery should mean.

The question that stuck: if this works inside a tech giant, why can’t it work in consulting?

Building the model

The idea was straightforward. Instead of delivering pentest results as a static document, deliver them through the platforms development teams already use.

Every finding was raised as a Jira ticket, tagged to the right team, with full technical detail — proof of concept, exploitation steps, and specific remediation guidance — all attached to the ticket. The person who found the issue and the person responsible for fixing it were now in the same thread. Questions got answered in hours, not weeks. Context didn’t get lost in forwarding chains.
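As a rough illustration of what "a finding as a ticket" can look like, here is a minimal sketch that turns one finding into an issue-creation payload. The overall `fields` layout follows the general shape of Jira's REST issue-creation body as I understand it; the `Finding` class, the `SEC` project key, the `Bug` issue type, and the label scheme are all assumptions made up for this example, not the setup used in the engagements described here. It only builds the payload and makes no network call.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    title: str
    severity: str                  # e.g. "High", "Medium", "Low"
    owning_team: str               # hypothetical routing label, e.g. "payments"
    proof_of_concept: str
    exploitation_steps: list[str]
    remediation: str


def to_issue_payload(finding: Finding, project_key: str) -> dict:
    """Build the JSON body for an issue-creation request (no network call)."""
    steps = "\n".join(
        f"{n}. {step}"
        for n, step in enumerate(finding.exploitation_steps, start=1)
    )
    description = "\n\n".join([
        "Proof of concept:\n" + finding.proof_of_concept,
        "Exploitation steps:\n" + steps,
        "Remediation guidance:\n" + finding.remediation,
    ])
    return {
        "fields": {
            "project": {"key": project_key},
            "issuetype": {"name": "Bug"},  # assumption: findings are filed as bugs
            "summary": f"[{finding.severity}] {finding.title}",
            "description": description,
            "labels": [
                "pentest",
                f"severity-{finding.severity.lower()}",
                finding.owning_team,
            ],
        }
    }


# Illustrative finding; every value here is invented for the example.
example = Finding(
    title="IDOR on invoice download endpoint",
    severity="High",
    owning_team="payments",
    proof_of_concept="Requesting another user's invoice ID returns their PDF.",
    exploitation_steps=[
        "Log in as a low-privilege test account.",
        "Change the invoice ID in the download request.",
    ],
    remediation="Check invoice ownership on the server before serving the file.",
)
payload = to_issue_payload(example, project_key="SEC")
```

The point of the sketch is that the proof of concept, the steps, and the remediation guidance travel together on the ticket, so the developer never has to go looking for a separate document.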

Everything else — scope documents, execution timelines, technical write-ups, executive summaries — lived on Confluence. A single source of truth that both sides could access and update. From the initial proposal through to test account creation, ticket raising, and remediation tracking, the entire engagement ran end-to-end on the client’s own platform.

The first version was built in 2022. Colleagues saw it work and started building their own demo environments. After several rounds of refinement, it was presented to a senior director at a large enterprise client in 2023. His response, unprompted: “This is Pentesting 2.0.”

Rethinking what goes in the report

Once the model was running, a second question surfaced — one that challenges an assumption the entire industry has accepted without thinking.

The common pushback on moving away from PDF reports is: “We still need one. Auditors ask for it. Our clients’ clients ask for it.” Fair enough. But nobody ever questioned what actually needs to be in that PDF.

A traditional pentest report contains everything: full technical detail, proof-of-concept exploitation steps, remediation guidance, screenshots of successful attacks. This document then gets emailed around — to project managers, to compliance teams, sometimes to external stakeholders. A working roadmap for exploiting your systems, sitting in email inboxes and shared drives across your organisation.

The people who need the technical detail — the developers who fix things — already have it. It’s in their Jira tickets, in the Confluence write-ups, in the direct thread with the person who found the issue. They don’t need a PDF.

The people who need the PDF (auditors, C-suite, external stakeholders) don’t need exploitation details. They need to know what was tested, what was found, how severe it was, and whether it’s been fixed.

The report still gets written. Executive summaries still get produced. The final PDF still has every field that auditors, clients, and their clients expect to see — title, severity, remediation status, scope, methodology, all of it. We don’t shy away from that work.

What changes is where the full technical detail lives. Proof-of-concept steps, exploitation walkthroughs, screenshots of successful attacks — those go only to the people involved in the mitigation process, through the ticketing platform. The PDF becomes a status document, not an exploitation manual.
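One way to picture the split is as two views of the same finding record: the full record stays on the ticketing platform, and the PDF is generated only from an explicit allow-list of fields. The sketch below is an assumption about how that could be modelled, not the tooling actually used; the class name, field names, and the `ticket_key` reference are hypothetical.

```python
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class FullFinding:
    # Fields suitable for the audit-facing PDF
    title: str
    severity: str
    status: str
    ticket_key: str            # hypothetical pointer back to the ticket
    # Fields that stay behind authenticated access on the ticket
    proof_of_concept: str
    exploitation_steps: str
    screenshots: tuple


# Allow-list: anything not named here never reaches the report.
REPORT_FIELDS = ("title", "severity", "status", "ticket_key")


def report_view(finding: FullFinding) -> dict:
    """Return only the fields that are safe to circulate in the PDF."""
    data = asdict(finding)
    return {name: data[name] for name in REPORT_FIELDS}


finding = FullFinding(
    title="Stored XSS in profile bio",
    severity="Medium",
    status="Fixed",
    ticket_key="SEC-101",
    proof_of_concept="(kept on the ticket only)",
    exploitation_steps="(kept on the ticket only)",
    screenshots=("xss-alert.png",),
)
row = report_view(finding)
```

Using an allow-list rather than a deny-list is a deliberate choice in this sketch: if someone later adds a new technical field to the record, it stays out of the PDF by default.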

The result: a report that gives an attacker very little to work with even if they get their hands on it, because the technical depth only exists behind authenticated access on the client’s own platform. And since remediation happens in real time through ticket comments, not in batches after a report is delivered, issues are typically fixed by the time the PDF exists at all.

The numbers

At one enterprise client, this model has been in production for over two years and is now entering its third.

Before adopting it, they’d used a well-known pentest-as-a-service vendor for two years. When evaluating the switch, they ran a direct comparison — same application, tested by both teams.

The previous vendor took 12 days and missed a high-severity issue as well as medium-severity findings. The new model delivered all findings in fewer than half as many days. But the interesting part isn’t the speed difference: it’s where the time went.

Most pentest reports come with a disclaimer: due to time constraints, not all vulnerabilities may have been identified. Auditors accept this. Clients accept this. Nobody questions it. But think about what that actually means – a significant portion of the engagement window is spent on report writing, formatting, internal review, and revision cycles. That’s time taken directly away from testing. The disclaimer isn’t covering for a limitation of the tester’s skill. It’s covering for a delivery model that eats its own time budget.

In the comparison, the previous vendor missed findings that our team caught – not because they were less capable, but because when you’re spending days compiling a compliant report, those are days you’re not spending inside the application. The report was compliant. It just wasn’t helping.

The question was never “can we write a better report?” It was: why can’t the reporting be seamless enough that it gets acted on almost instantly – and still produce a compliant document at the end?

That’s what the overhead reduction actually looks like. In the traditional model, every application carried roughly 4 days of non-testing work – 2 days of report writing plus 2 days of retesting and report revision. Under the new model, retesting takes about 2 hours: the developer changes the status of the ticket, verification happens in the same comment thread, and the ticket gets closed. No report rewrite. No back-and-forth email chains. No recompilation of the same document with updated statuses. The comms that used to span weeks of emails now happen in ticket comments in real time.

Close to four days saved per application – for the client and the consultant. Days that go back into actual testing instead of documentation theatre. Across a year’s worth of engagements, that’s not a marginal efficiency gain – it’s a structural cost elimination.
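The per-application arithmetic can be written out as a small sketch. Two things here are assumptions rather than figures from the engagements: an eight-hour working day, and treating report-writing effort under the ticket-based model as zero (the final status PDF still takes some time, which this ignores). The yearly application count is a hypothetical input.

```python
HOURS_PER_DAY = 8  # assumption: one working day is eight hours


def overhead_days(report_writing_days: float, retest_days: float) -> float:
    """Non-testing work per application, in days."""
    return report_writing_days + retest_days


# Traditional model: 2 days of report writing + 2 days of retest and revision.
traditional = overhead_days(report_writing_days=2, retest_days=2)

# Ticket-based model: about 2 hours of retesting in the ticket thread.
# Assumption: separate report-writing effort is counted as zero here.
ticket_based = overhead_days(report_writing_days=0, retest_days=2 / HOURS_PER_DAY)

saved_per_app = traditional - ticket_based


def yearly_saving(apps_per_year: int) -> float:
    """Days saved per year for a hypothetical number of applications."""
    return saved_per_app * apps_per_year
```

Under these assumptions the overhead drops from 4 days to 0.25 days per application, a saving of 3.75 days, which is where the roughly-four-days figure comes from. For a hypothetical 20 applications a year, `yearly_saving(20)` gives 75 days.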

What actually changed

The numbers matter, but they’re not the point.

What this model actually changed was the relationship between the person who finds a vulnerability and the person who fixes it. They’re in the same thread, working the same ticket, solving the same problem. The pentester isn’t a stranger who shows up once a year and drops a document. They’re a collaborator who raises a ticket, provides context, answers questions, and verifies the fix.

That shift – from adversarial handoff to collaborative workflow – is what that senior director meant when he called it “Pentesting 2.0.” It’s not about Jira or Confluence specifically. It’s about taking security out of isolation and putting it where the work actually happens.

When a Big Four auditor reviewed this model at a client – looking at how they handled pentests, risk management, code reviews, and the broader security programme – they didn’t just approve it. They admired the maturity of the process. That matters, because the common objection to any new delivery model is “will the auditors accept it?” In this case, the auditors thought it was better than what they usually see.

Where this applies – and where it doesn’t

I want to be clear about something: I’m not suggesting this is how the entire industry should work. It isn’t. The vast majority of pentesting engagements will not change – and for reasons that have nothing to do with whether this model is better. Some clients want a checkbox. Some engagements are one-off assessments where the relationship begins and ends with a scoped test. Some organisations don’t have the platform maturity, the internal appetite, or the trust required to make this work. That’s fine. Those engagements serve a purpose.

This model works where there’s a genuine partnership – a trusted, ongoing relationship where both sides want the security posture to actually improve, not just be documented. It works with internal teams who want to embed security into their workflow, and it works with external consultants who return to the same client repeatedly and build institutional knowledge over time. It works best in long-term, recurring engagements where the overhead of report writing compounds quarter after quarter. That’s where the cost savings become structural, not marginal.

It also works for one-off engagements – the delivery mechanics don’t require a multi-year contract. But the deeper value, the part where developers start writing secure code because they understand the why, requires time and relationship. A five-day pentest can use this delivery model and save days on reporting. A twelve-month engagement can use it and change how a team thinks about security. Those are different outcomes from the same approach.

I didn’t build this because I think traditional pentesting is wrong. I built it because I saw what it looked like when it worked differently — and I wanted to see if that could translate to consulting. It did. But it requires something most consulting engagements don’t start with: mutual trust and a shared interest in outcomes over documentation.
