This is the first post in a multi-part series. Each problem described here has a story behind it — a client engagement, a failure, a turning point. Those stories are coming. This post sets the stage.

There’s a version of security consulting that looks great on paper. A pentest gets scoped, executed, and delivered as a PDF. The client receives it, files it somewhere, maybe fixes a few things, and everyone moves on until next year. The tools are there. The frameworks are there. The compliance checkboxes get ticked.

And yet — CISOs are still stressed. Dev teams and security teams still don’t talk to each other. Companies are spending six figures on tools and getting the same results they got without them. Reports that took weeks to produce sit in inboxes unread. Vulnerabilities that were “critical” on paper turn out to be irrelevant in context, while the ones that actually matter get buried in noise.

I spent the better part of four years inside this cycle — running pentests, sitting across from CISOs, working with dev teams, watching the same patterns repeat across industries and company sizes. And somewhere along the way, I stopped seeing individual client problems and started seeing systemic ones.

This post is an attempt to name them.

Not all of these are solved. For some, the fix has been proven across multiple engagements; others are still being built. I’m sharing them not as a finished playbook but as what I’ve learned from sitting close enough to these problems to understand why the obvious solutions don’t work.

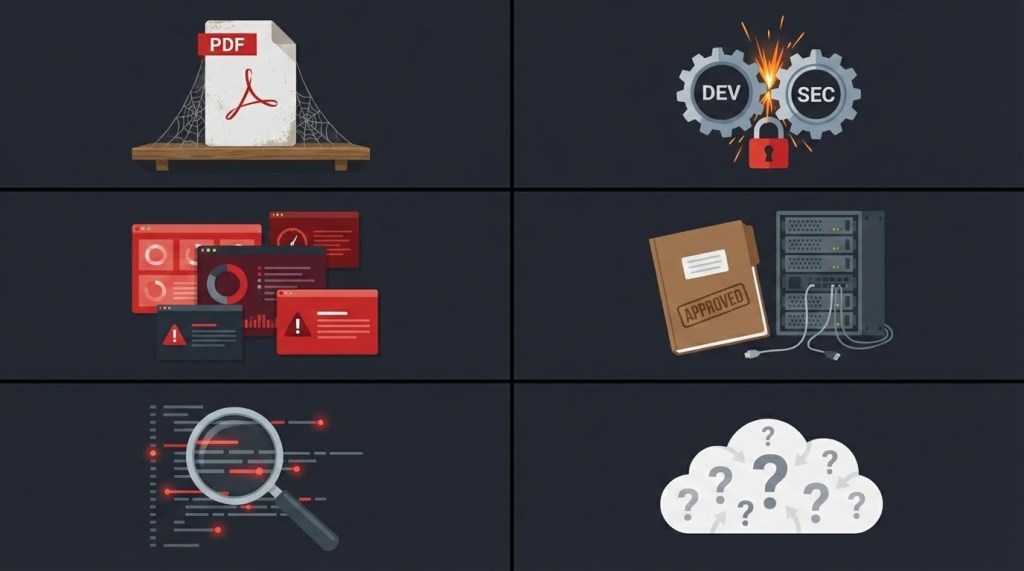

1. The Pentesting Delivery Problem

Pentesting hasn’t meaningfully evolved in decades. The delivery mechanism is still a PDF report — emailed to a project manager, forwarded to someone technical, maybe opened, maybe not. The person who finds the vulnerability and the person who needs to fix it almost never talk to each other directly.

There’s also a cost problem hiding in plain sight that the industry has simply accepted as overhead. Writing the report takes days. Reviewing it takes more. Then the client fixes some things, a retest happens, and the report gets rewritten. In most engagements, this isn’t a one-time cycle — it’s a series of retests, each producing an updated report. The time spent writing, reviewing, and rewriting documents that exist only to satisfy process is time nobody’s spending on actually improving security. And everyone pays for it without questioning it.

I saw a different model while working inside one of the largest cloud service providers. Their security engineers and dev teams operated in the same ecosystem — Jira, Confluence — sharing scope, threat models, test plans, and results in real time. Security wasn’t a separate event that happened to the dev team. It was part of the workflow.

That became the question: if this works inside a tech giant, why can’t it work in consulting?

The answer, it turned out, is that it can. It just requires rethinking what “delivery” means — from a document you hand over at the end, to a process that runs alongside the team doing the actual work.

Status: Proven. Reproduced across multiple industries. Validated by a Big Four auditor, confirmed in a head-to-head comparison against a well-known pentest-as-a-service platform, and independently described by a senior industry director as “Pentesting 2.0.”

[Full story coming → The Pentesting Delivery Problem]

2. The Dev-Sec Friction Problem

Security teams and development teams have been at odds for as long as both have existed. Security shows up, drops a list of problems, and leaves. Developers see it as criticism. Managers see it as a blocker. Everyone treats it as someone else’s problem.

Frameworks exist to fix this — on paper. In practice, the friction runs deep. It’s cultural, not procedural. You can’t solve it with a policy document or a shared Slack channel.

What I’ve seen work — consistently, across very different organisations — is making security a shared problem rather than an imposed one. Not “here’s what you did wrong” but “here’s what we’re going to fix together.” Tickets with suggested fixes, not just findings. Threat models co-authored with the developers who actually know the system. Calls where both sides are solving the same problem, not defending their territory.

At one engagement, the first call between the security team and the dev team was heated, genuinely adversarial. Six months later, the relationship had become a working friendship. At another, a large enterprise told its leadership that, for the first time, its DevOps and security operations teams were truly collaborating, and described the change as a cultural shift.

Status: Proven. Repeatedly. The pattern holds across different industries, team sizes, and levels of initial friction.

[Full story coming → The Dev-Sec Friction Problem]

3. The Tool-Dependency Problem

The security tooling market is enormous. SAST, DAST, SCA, CSPM: there’s a product for everything. Companies commit significant budgets to these tools and still end up with the same volume of unresolved vulnerabilities.

The problem isn’t that the tools don’t work. It’s that tools alone can’t solve what is fundamentally a people and process problem. A scanner can find a vulnerability. It can’t make a developer understand why it matters. It can’t prioritise based on business context. It can’t build the habits that prevent the same class of issue from appearing again.

At one engagement, I was entrusted with running a long-term security programme with zero commercial tooling: no SAST tools, no SCA scanners. Instead, the focus went into training, code reviews, developer enablement, and building a culture where secure coding wasn’t an afterthought. The count of confirmed security issues fell from the hundreds to a fraction of the original. By the end, you could have run any commercial scanner against those repositories and it wouldn’t have found much that the team hadn’t already handled.

The lesson wasn’t “tools are useless.” It was that empowering the team to write secure code in the first place makes the tooling question secondary.

Status: Proven at scale in one major engagement. The harder question — whether this scales without sustained hands-on involvement — is one I’m still working through.

[Full story coming → The Tool-Dependency Problem]

4. The Play-Pretend Problem

This is the one that’s hardest to talk about because it implicates the entire consulting incentive structure.

Some organisations have everything — the frameworks, the tools, the policies, the budget. And yet nothing works. Vulnerabilities pile up. Incidents repeat. Internal teams produce documentation that looks impressive but doesn’t translate into operational capability. I’ve walked into engagements where three different copies of the same vendor report sat on a shelf, alongside frameworks that were technically sound but had never been operationalised.

The uncomfortable truth is that the consulting industry sometimes benefits from this. When the incentive is billable days rather than solved problems, there’s a structural pull toward adding more frameworks, more documentation, more process layers — without ever building something that works today.

The counter to this isn’t another framework. It’s a shift in intent: build something operational on a platform the team actually uses, keep the compliance artefacts for the auditors, and measure success by whether the problem is actually getting smaller — not by how many pages the report has.

Status: Proven in terms of the collaboration breakthrough. The fuller vision — embedding operational risk management alongside vulnerability management — is still in progress.

[Full story coming → The Play-Pretend Problem]

5. The Static Analysis Problem

Every SAST tool on the market has the same fundamental limitation: it can find patterns, but it can’t understand context. The result is a flood of findings — many of them false positives, most of them lacking actionable remediation guidance, and almost none of them prioritised in a way that reflects the actual risk to the business.

Security teams spend hours triaging scanner output. Developers learn to ignore it. The tools become background noise — technically running, practically useless for decision-making.

I’ve been building a different approach: an LLM-powered code analysis tool that doesn’t just pattern-match but actually understands the codebase it’s reviewing. It classifies files by function, maps dependencies, and produces findings with contextual severity and specific remediation guidance. The architecture has been independently validated by a senior security leader at a large enterprise.
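The post doesn’t describe the tool’s internals, so what follows is only a minimal sketch of the general idea behind “contextual severity”, not the tool itself. It assumes a scanner-style list of findings, a toy import graph, and a naive path-based heuristic standing in for the LLM’s file classification; the role names, weights, scores, and file paths are all hypothetical.

```python
from collections import deque
from dataclasses import dataclass

# Illustrative numeric scores for scanner-style labels (an assumption, not a standard).
BASE_SCORE = {"CRITICAL": 9.0, "HIGH": 7.0, "MEDIUM": 5.0, "LOW": 2.0}

# Hypothetical weights per file role; a real tool would infer roles far more carefully.
ROLE_WEIGHT = {"handler": 1.0, "library": 0.8, "script": 0.4, "test": 0.1}


@dataclass
class Finding:
    rule: str
    path: str
    base_severity: str  # the label a pattern-only scanner would assign


def classify(path: str) -> str:
    """Naive path heuristic standing in for an LLM-based file classification step."""
    if "test" in path:
        return "test"
    if path.startswith("handlers/"):
        return "handler"
    if path.startswith("scripts/"):
        return "script"
    return "library"


def reachable(imports: dict[str, list[str]], entry_points: list[str]) -> set[str]:
    """Breadth-first walk of the import graph, starting from the entry points."""
    seen = set(entry_points)
    queue = deque(entry_points)
    while queue:
        for dep in imports.get(queue.popleft(), []):
            if dep not in seen:
                seen.add(dep)
                queue.append(dep)
    return seen


def contextual_score(finding: Finding, live: set[str]) -> float:
    """Scale the scanner's severity by file role, halving it for unreachable code."""
    score = BASE_SCORE[finding.base_severity] * ROLE_WEIGHT[classify(finding.path)]
    return score if finding.path in live else score * 0.5


# Hypothetical repository: which file imports which.
imports = {
    "handlers/login.py": ["lib/db.py"],
    "lib/db.py": [],
    "tests/test_db.py": ["lib/db.py"],
}
live = reachable(imports, entry_points=["handlers/login.py"])
findings = [
    Finding("hardcoded-secret", "tests/test_db.py", "CRITICAL"),
    Finding("sql-injection", "lib/db.py", "HIGH"),
]
for f in sorted(findings, key=lambda x: contextual_score(x, live), reverse=True):
    print(f.rule, f.path, round(contextual_score(f, live), 2))
```

With these placeholder weights, the HIGH finding in live library code outranks the CRITICAL one in an unreachable test file, the kind of inversion a pattern-only scanner can’t make. Whether a given test-file finding is really low risk is still a judgement call; the numbers here only illustrate the mechanism.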

Status: Architecture validated. Currently being built. This one isn’t proven yet — it’s a thesis being tested.

[Full story coming → The Static Analysis Problem]

6. The Cloud Security Posture Problem

Hundreds of CSPM tools exist. Most of them do the same thing: scan a cloud environment and produce a list of CRITICAL and HIGH findings with no business context. None of them can generate an accurate architecture diagram of what they’re actually reviewing. The output is noise dressed up as insight.

A meaningful cloud security review starts with understanding the architecture — what’s connected to what, what’s exposed, what matters. That step is almost always manual, time-consuming, and skipped. I’ve done enough cloud architecture reviews across multi-cloud environments to know the pattern: you spend more time figuring out what’s actually deployed than you spend assessing it. The architecture diagram that should exist before the review starts is the thing you end up building during the review — every time.

That’s the gap I’m working to close: contextual configuration analysis grounded in an actual understanding of the environment, not just a scan of settings against a checklist. Start with architecture mapping and work outward from there.
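The post keeps this approach at a high level. As a minimal sketch of what “start with architecture mapping” could look like, the Python below assumes a hand-written resource inventory (every resource name and field in it is hypothetical), derives a connection map, marks what the internet can reach, renders the map as a Graphviz DOT diagram, and orders findings by exposure instead of by checklist position.

```python
from collections import deque

INTERNET = "0.0.0.0/0"

# Hypothetical, hand-written inventory; in practice this would come from the
# provider's APIs, and the field names here are invented for the sketch.
INVENTORY = {
    "alb-public": {"ingress_from": [INTERNET], "connects_to": ["api-service"]},
    "api-service": {"ingress_from": ["alb-public"], "connects_to": ["orders-db"]},
    "orders-db": {"ingress_from": ["api-service", "batch-worker"], "connects_to": []},
    "batch-worker": {"ingress_from": [], "connects_to": ["orders-db"]},
}


def edge_set(inventory: dict) -> set[tuple[str, str]]:
    """Collect (source, target) pairs; the set drops edges declared on both ends."""
    pairs = set()
    for name, res in inventory.items():
        pairs.update((src, name) for src in res["ingress_from"])
        pairs.update((name, dst) for dst in res["connects_to"])
    return pairs


def exposed(pairs: set[tuple[str, str]]) -> set[str]:
    """Resources reachable from the internet, directly or through other resources."""
    adjacency: dict[str, list[str]] = {}
    for src, dst in pairs:
        adjacency.setdefault(src, []).append(dst)
    seen: set[str] = set()
    queue = deque([INTERNET])
    while queue:
        for nxt in adjacency.get(queue.popleft(), []):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen


def to_dot(pairs: set[tuple[str, str]], hot: set[str]) -> str:
    """Render the map as Graphviz DOT, outlining internet-reachable resources in red."""
    lines = ["digraph cloud {", f'  "{INTERNET}" [shape=diamond];']
    lines += [f'  "{node}" [color=red];' for node in sorted(hot)]
    lines += [f'  "{src}" -> "{dst}";' for src, dst in sorted(pairs)]
    lines.append("}")
    return "\n".join(lines)


def triage(findings: list[tuple[str, str]], hot: set[str]) -> list[tuple[str, str]]:
    """Order (resource, issue) findings so those on exposed paths come first."""
    return sorted(findings, key=lambda f: f[0] not in hot)


pairs = edge_set(INVENTORY)
hot = exposed(pairs)
print(to_dot(pairs, hot))
print(triage([("batch-worker", "unencrypted volume"),
              ("api-service", "outdated TLS policy")], hot))
```

The diagram comes first and the findings are read against it, so the same misconfiguration ranks differently depending on whether an internet path reaches the resource. The sketch deliberately ignores what makes this hard in real environments, such as identity-based access, cross-account trust, and multi-cloud networking.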

Status: In progress. Still being built. This one exists because I got tired of solving the same problem manually on every engagement.

[Full story coming → The Cloud Security Posture Problem]

The Thread That Connects Them

These six problems look different on the surface — one is about reporting, another about tooling, another about culture. But they share a root cause: the security consulting industry has optimised for appearances over outcomes. Reports that look thorough but don’t get read. Tools that generate alerts but don’t reduce risk. Frameworks that satisfy auditors but don’t change behaviour. Teams that coexist in the same organisation but work against each other.

Each problem I’ve described was exposed not by studying the industry from the outside, but by sitting across from CISOs, developers, and security teams who were living with the consequences. The most important shift wasn’t technical — it was learning to ask “what’s actually bothering you?” instead of showing up with a predetermined solution.

That question, asked over early-morning coffee calls before the sales pitch and before the scope document, is what opened the door to every engagement where something meaningful got built. CISOs don’t need another vendor offering pentests. They need someone who understands that pentesting is often the smallest of their problems.

The stories that follow in this series are the details behind each of these problems — what exposed them, what was tried, what worked, and what’s still being figured out. They’re not case studies. They’re learnings. Some of them are messy. Some of them involve failures and wrong turns. All of them changed how I think about what security consulting should actually be.
